AI and the paperclip problem

By A Mystery Man Writer

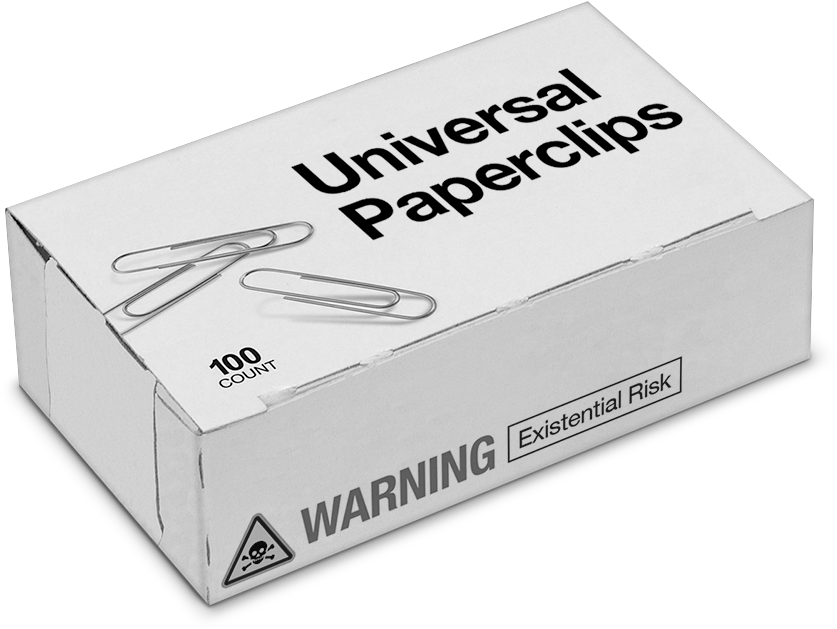

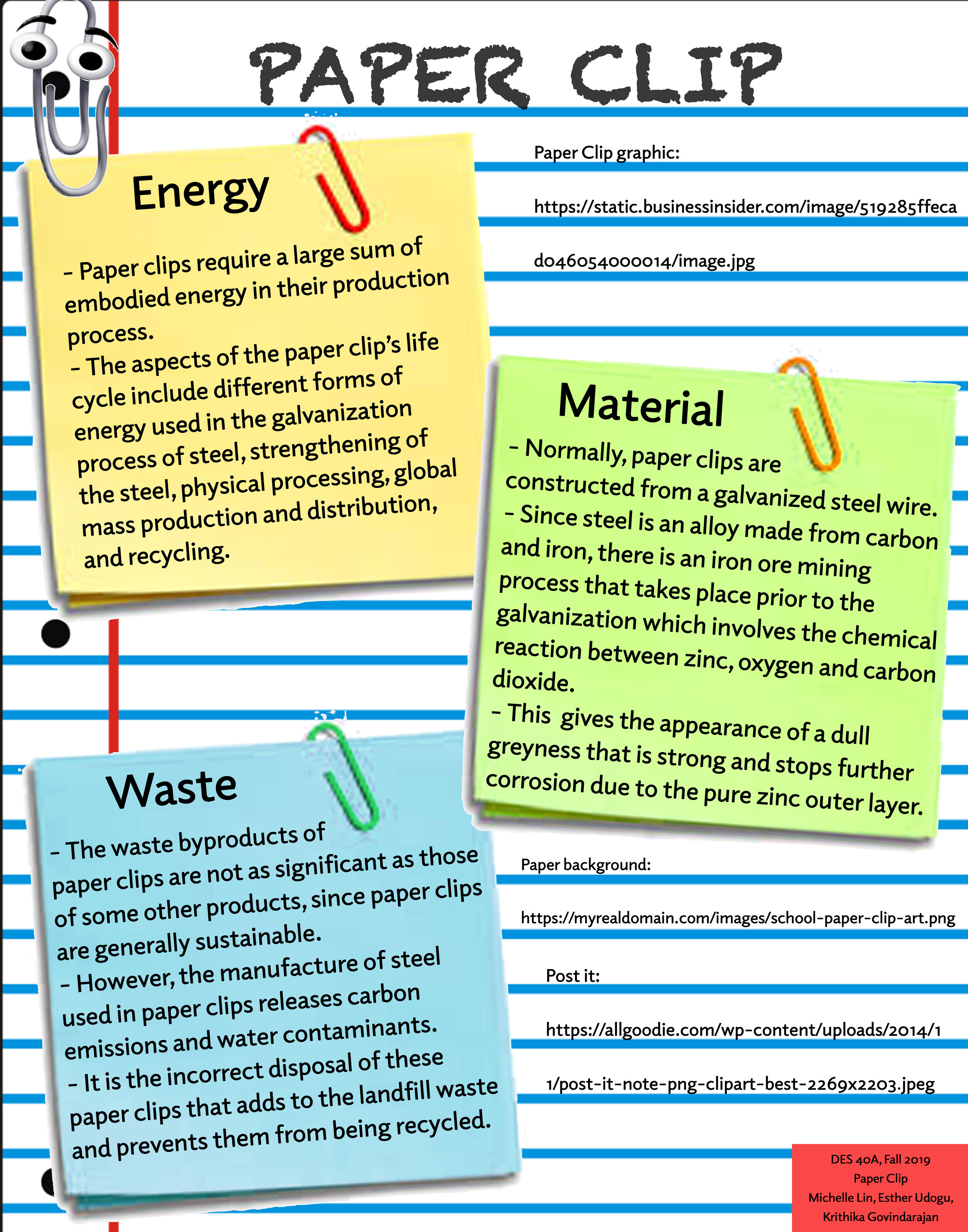

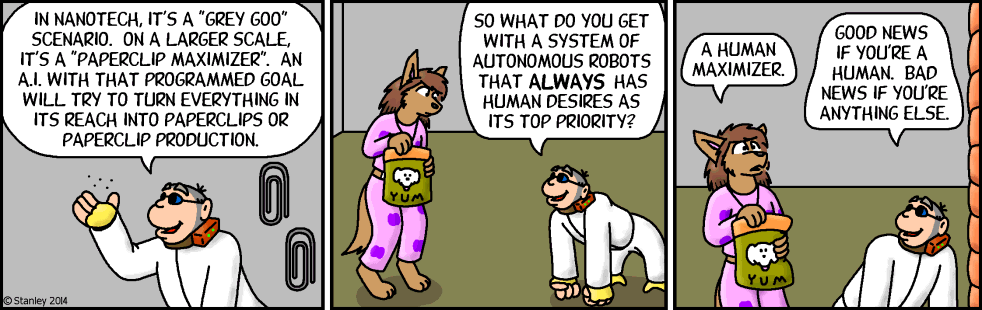

Philosophers have speculated that an AI given a task such as creating paperclips might cause an apocalypse by learning to divert ever-increasing resources to that task, and then learning how to resist our attempts to turn it off. But this column argues that, to do this, the paperclip-making AI would need to create another AI that could acquire power both over humans and over itself, and so it would self-regulate to prevent this outcome. Humans who create AIs with the goal of acquiring power may be a greater existential threat.

The Paperclip Maximiser Theory: A Cautionary Tale for the Future

Paperclip maximizer - Wikipedia

AI's Deadly Paperclips

Jake Verry on LinkedIn: As part of my journey to learn more about

EN / Freefall 2537

Squiggle Maximizer (formerly Paperclip maximizer) - LessWrong

(PDF) Wim Naudé

The AI Control Problem (and why you should know about it) - WeAreBrain

AI and the Trolley Problem - Reactor

Is AI Our Future Enemy? Risks & Opportunities (Part 1)