Using LangSmith to Support Fine-tuning

By A Mystery Man Writer

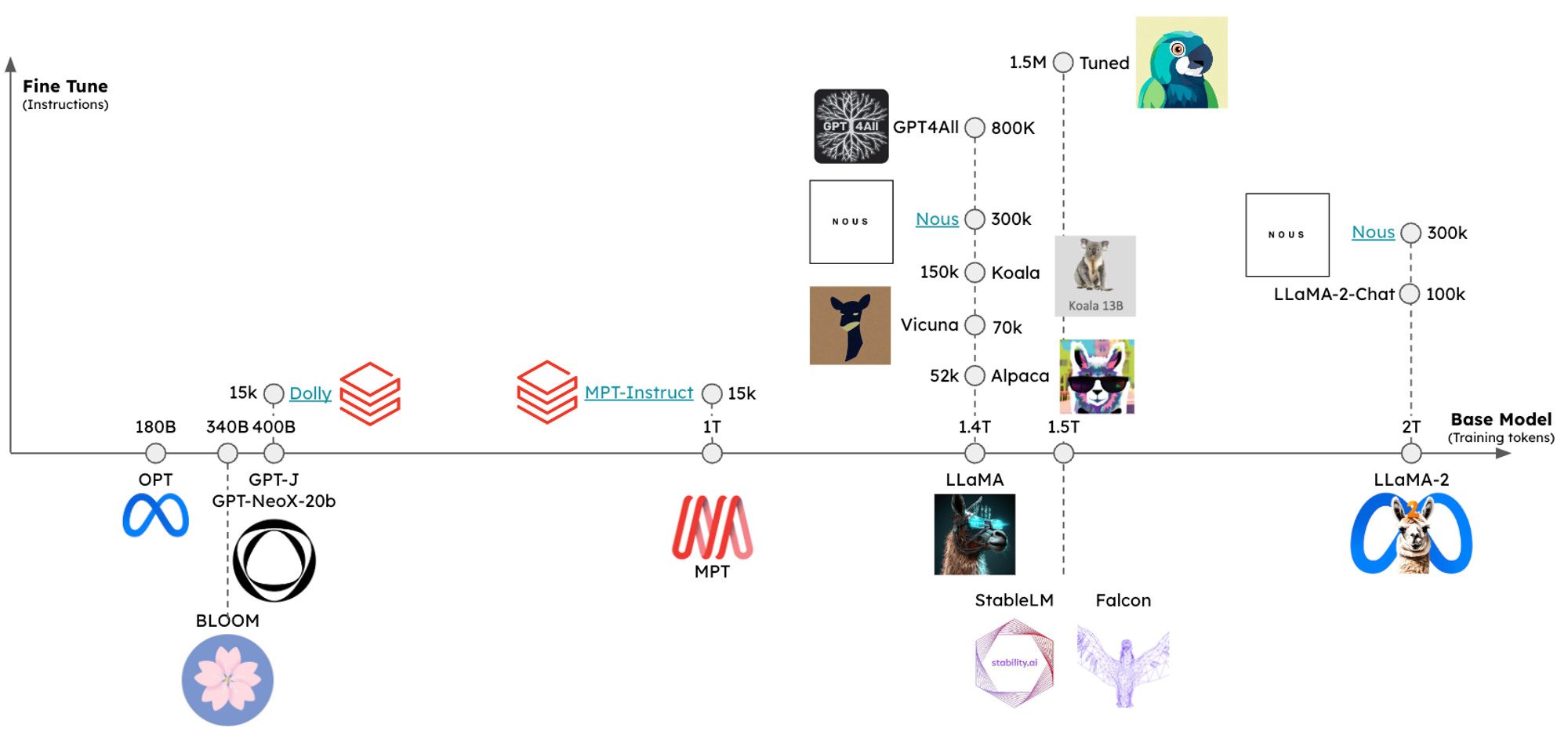

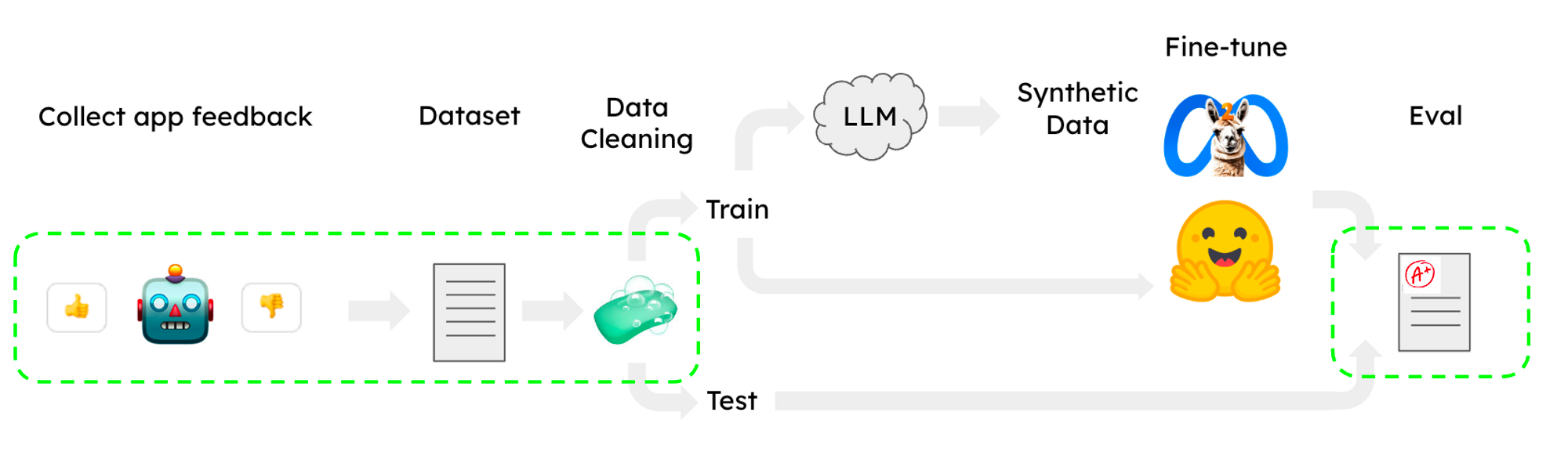

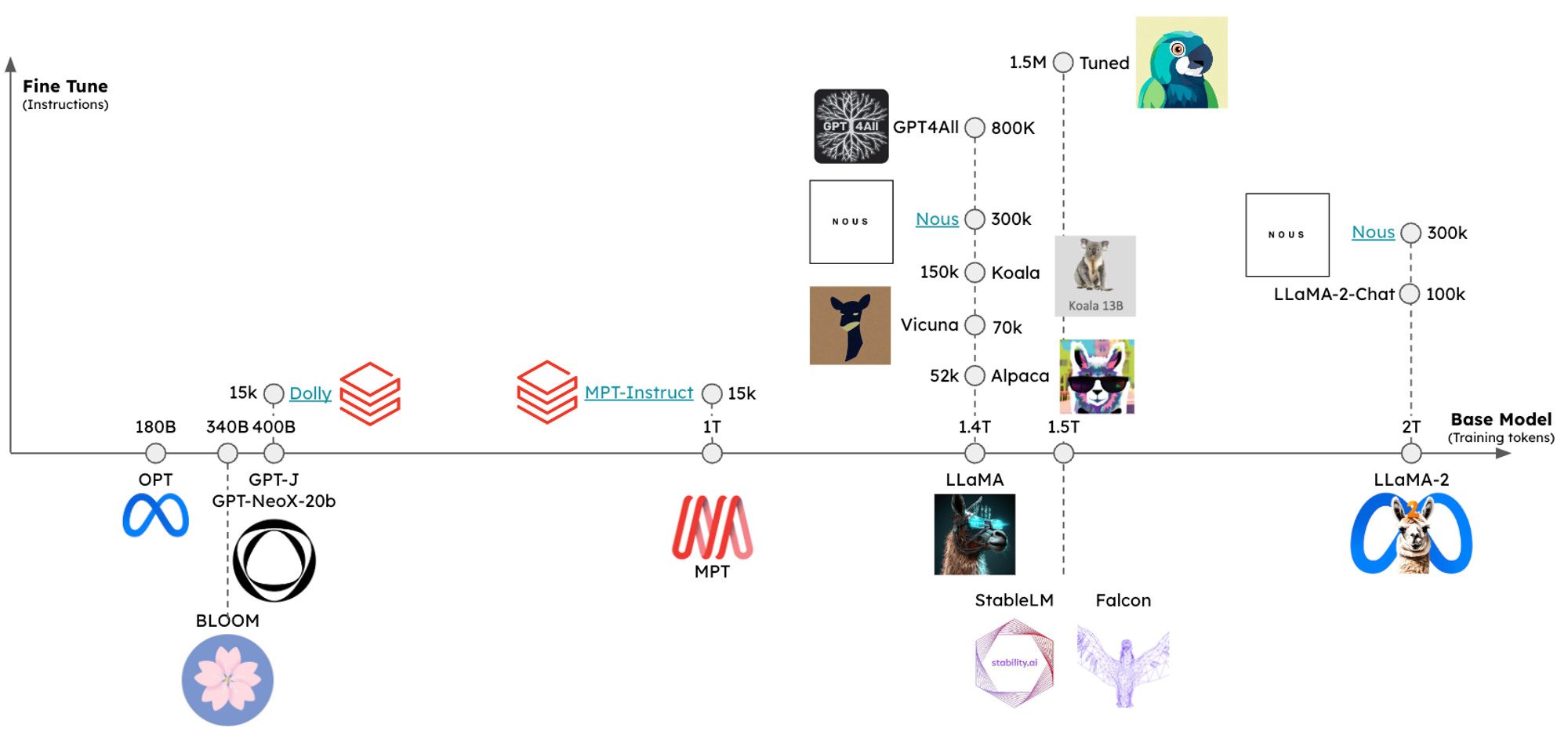

Summary

We created a guide for fine-tuning and evaluating LLMs, using LangSmith for dataset management and evaluation. The guide covers two routes: an open-source LLM trained on Colab with HuggingFace, and OpenAI's new fine-tuning service. As a test case, we fine-tuned LLaMA2-7b-chat and gpt-3.5-turbo on an extraction task (knowledge graph triple extraction), using training data exported from LangSmith, and then evaluated the results in LangSmith as well. The Colab guide is here.

Context I
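The data-preparation step mentioned in the summary (turning exported examples into training data for a chat model such as gpt-3.5-turbo) can be sketched roughly as below. This is a minimal illustration under stated assumptions, not the guide's actual code: the field names `text` and `triples`, the system prompt, and the helper names are hypothetical, and the export from LangSmith itself is not shown. The only fixed part is the output shape: one JSON object per line with a `messages` list of role/content pairs, which is the chat format used for fine-tuning gpt-3.5-turbo.

```python
import json

# Hypothetical system prompt; the prompt used in the guide is not shown here.
SYSTEM_PROMPT = (
    "Extract knowledge graph triples (subject, predicate, object) from the text."
)


def to_chat_record(example):
    """Convert one exported example into a chat-format training record.

    Assumes `example` has a "text" string and a "triples" list of
    [subject, predicate, object] lists (hypothetical field names).
    """
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": example["text"]},
            # The target output is stored as a JSON string so the model
            # learns to emit machine-readable triples.
            {"role": "assistant", "content": json.dumps(example["triples"])},
        ]
    }


def to_jsonl(examples):
    """Serialize examples as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(to_chat_record(ex)) for ex in examples)


if __name__ == "__main__":
    sample = [
        {
            "text": "Paris is the capital of France.",
            "triples": [["Paris", "capital of", "France"]],
        }
    ]
    print(to_jsonl(sample))
```

Storing the triples as a JSON string in the assistant turn is one possible design choice; it keeps the fine-tuned model's output easy to parse when scoring it against reference triples during evaluation.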
